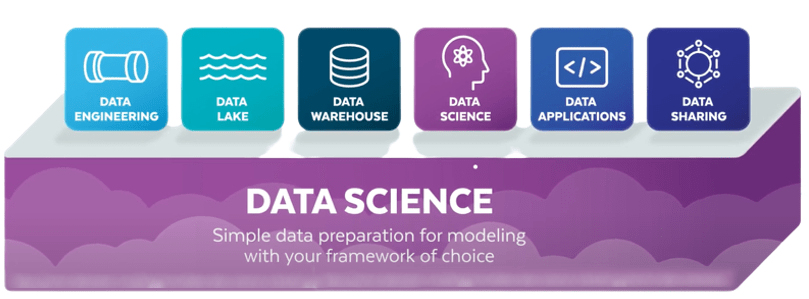

Snowflake is a cloud-based data warehouse that provides scalable and flexible storage for data, making it an ideal platform for data science workloads.

The Snowflake Data Science platform is designed to integrate with and support the applications that data scientists rely on daily. Its distinct cloud-based architecture enables Machine Learning innovation for Data Science and Data Analysis.

Platforms for Data Science are essential tools for Data Scientists. They support data exploration, model development, and model distribution. They also make data preparation and visualization easier while providing large-scale computing infrastructure.

Data Science platforms also include APIs that allow models to be tested and put into production with minimal outside engineering effort.

In this blog, we will cover the importance of Snowflake for data science and some best practices for using Snowflake for data science workloads.

Scalability:

Snowflake is a cloud-based data warehouse that can scale up or down to meet the needs of any data science workload.

It can handle large amounts of data and support high-performance analytics, making it an ideal platform for data science workloads.

Flexibility:

Snowflake supports multiple programming languages, including SQL, Python, and R, allowing data scientists to use their preferred tools and techniques.

It also supports a variety of data formats, including structured, semi-structured (JSON, Avro, ORC, Parquet, or XML), and unstructured data, making it a flexible platform for data science workloads.

Performance:

Snowflake is designed for high-performance analytics, with automatic query optimization and caching features that help improve query performance.

It also supports clustering keys, which co-locate related rows so that queries filtering on the clustered columns scan less data. This helps most on large tables that are queried frequently.

Collaboration:

Snowflake allows multiple users to work on the same data and share insights, making it a collaborative platform for data science workloads.

It also supports data sharing between organizations, allowing data scientists to access external data sources and collaborate with other organizations.

Security:

Snowflake provides advanced security features, including encryption, access controls, and audit logging, that help protect sensitive data and ensure compliance with data privacy regulations.

Overall, Snowflake’s scalability, flexibility, performance, collaboration, and security features make it an ideal platform for data science workloads.

Data scientists can use Snowflake to load and transform data, run machine learning models and other data science algorithms, and derive insights that can drive business success.

Best practices for using Snowflake for data science workloads:

Here are a few important aspects of Snowflake in Data Science:

Data discovery is the first step in developing any ML model. During this phase, data scientists must gather all available data relevant to the ML application at hand. Gathering data becomes trivial if all of your data is already in Snowflake.

Ad-hoc analysis and feature engineering are simple with the Snowflake UI or SnowSQL.

The Snowflake Connector for Python excels at extracting data to an environment where the most popular Python data science tools are available.
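
As a rough illustration of that extraction step, the sketch below pulls a query result into a pandas DataFrame. The helper name `fetch_training_frame`, the table `CUSTOMER_FEATURES`, and its column are hypothetical; `cursor()`, `execute()`, and `fetch_pandas_all()` are calls provided by the connector. Opening the connection appears only in comments because it needs real credentials.

```python
def fetch_training_frame(conn, sql, params=None):
    """Run `sql` on an open Snowflake connection and return a pandas DataFrame."""
    cur = conn.cursor()
    try:
        cur.execute(sql, params)
        # fetch_pandas_all() requires the connector's pandas extra:
        #   pip install "snowflake-connector-python[pandas]"
        return cur.fetch_pandas_all()
    finally:
        cur.close()


# Usage (placeholders in angle brackets must be replaced with real values):
#
#   import snowflake.connector
#   conn = snowflake.connector.connect(
#       account="<account>", user="<user>", password="<password>",
#       warehouse="<warehouse>", database="<database>", schema="<schema>",
#   )
#   df = fetch_training_frame(
#       conn,
#       "SELECT * FROM CUSTOMER_FEATURES WHERE SIGNUP_DATE >= %s",  # hypothetical table
#       ("2023-01-01",),
#   )
```

Passing the connection in, rather than creating it inside the helper, keeps credentials out of the analysis code and lets the same function be reused across notebooks.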

When it comes to model training, the most important feature that Snowflake offers is access to data.

In addition to your own data, Snowflake can provide you with access to external data via its Data Marketplace.

Reliable training and maintenance of ML models necessitate a reproducible training process, and lost data is a common issue for reproducibility. Snowflake’s time travel features can come in handy here.

Due to its limited retention period, time travel will not support all use cases, but it can save a lot of trouble during early prototyping and proof-of-concept projects.
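
One way to use time travel for reproducibility is to record the timestamp at which a training set was read and re-query the table as of that moment. The sketch below only builds the SQL text using Snowflake's `AT(TIMESTAMP => ...)` clause. The function name and the `ML.FEATURES` table are hypothetical, and the query will only succeed while the timestamp is still inside the table's retention period.

```python
import re
from datetime import datetime, timezone

# Accepts up to three dot-separated unquoted identifiers (database.schema.table).
_IDENT = re.compile(r"^[A-Za-z_][A-Za-z0-9_$]*(\.[A-Za-z_][A-Za-z0-9_$]*){0,2}$")


def time_travel_query(table, as_of):
    """Build a SELECT that reads `table` as it existed at `as_of`."""
    if not _IDENT.match(table):
        raise ValueError(f"unexpected table name: {table!r}")
    if as_of.tzinfo is None:
        raise ValueError("as_of must be timezone-aware")
    # Normalise to UTC, whole seconds, ISO 8601 text.
    ts = as_of.astimezone(timezone.utc).replace(microsecond=0).isoformat()
    return f"SELECT * FROM {table} AT(TIMESTAMP => '{ts}'::timestamp_tz)"


# Hypothetical table and snapshot time, for illustration only.
snapshot = datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc)
query = time_travel_query("ML.FEATURES", snapshot)
```

Storing `snapshot` next to the trained model makes it possible to rebuild the same training set later, for as long as the retention period allows.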

With the release of Snowpark and Java user-defined functions (UDFs), Snowflake’s support for ML model deployment has greatly improved.

UDFs are Java (or Scala) functions that take Snowflake data as input and generate a value based on custom logic.

The distinction between UDFs and Snowpark is subtle. Snowpark itself provides a mechanism for handling tables in Snowflake from Java or Scala in order to perform SQL-like operations on them. This is distinct from a UDF, which is a function that produces an output by operating on a single row in a Snowflake table.

Snowflake Scheduled Tasks can be a useful orchestration tool for tracking ML predictions.
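
A scheduled task is defined with a `CREATE TASK` statement and starts out suspended, so it has to be resumed before it runs. The sketch below builds both statements as strings that could be sent through any SQL client. The helper name and the task, warehouse, and table names are hypothetical, and the names are inserted verbatim, so they should come from trusted configuration rather than user input.

```python
def scheduled_task_statements(task, warehouse, every_minutes, body_sql):
    """Return the SQL needed to create a task and switch it on."""
    if every_minutes < 1:
        raise ValueError("every_minutes must be at least 1")
    create = (
        f"CREATE OR REPLACE TASK {task}\n"
        f"  WAREHOUSE = {warehouse}\n"
        f"  SCHEDULE = '{every_minutes} MINUTE'\n"
        f"AS\n{body_sql}"
    )
    # Newly created tasks are suspended until explicitly resumed.
    resume = f"ALTER TASK {task} RESUME"
    return [create, resume]


# Hypothetical example: log the average prediction score once an hour.
statements = scheduled_task_statements(
    task="TRACK_PREDICTIONS",
    warehouse="ML_WH",
    every_minutes=60,
    body_sql=(
        "INSERT INTO PREDICTION_STATS "
        "SELECT CURRENT_TIMESTAMP(), AVG(SCORE) FROM PREDICTIONS"
    ),
)
```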

Monitor complex issues like data drift by scheduling tasks that use UDFs or building processes with Snowpark.
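
The article does not name a specific drift metric, so as one possible choice, the sketch below computes the population stability index (PSI) between a baseline sample and a newer sample. It is plain Python with no Snowflake dependency; wrapping it in a UDF or calling it from a scheduled process is left out. The sample data is made up, and the 0.25 cut-off is a commonly quoted rule of thumb, not a Snowflake setting.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Return the PSI between a baseline sample and a newer sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")

    # Bin edges come from the baseline so both samples share the same buckets.
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge buckets.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]  # hypothetical training-time feature values
shifted = [v + 0.5 for v in baseline]     # hypothetical values after the data has moved
drifted = population_stability_index(baseline, shifted) > 0.25
```

Identical samples give a PSI of zero, and the score grows as the newer sample moves away from the baseline, which makes it a convenient single number to log on a schedule and alert on.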

When problems are discovered, any analyst or data scientist can use the Snowflake UI to dig deeper and figure out what’s going on.

Dashboards based on Machine Learning predictions can also be created using the Snowflake connector or integrations with popular BI tools such as Tableau.

Tejaswini Halmare

Data Engineer

Boolean Data Systems

Tejaswini Halmare works as a Data Engineer at Boolean Data Systems. She is a certified Matillion Associate who has built many end-to-end ML/DL Data Science solutions. Her experience includes working with ML/DL, Snowflake, Matillion, Python, and Streamlit.

Conclusion:

The cloud has largely enabled the massive leap to advanced analytics. Companies can collect, store, and analyze more data than ever before, and with graphics processing unit (GPU) accelerated computing, they can train multiple ML models concurrently in minutes and then select the most accurate ones to deploy.

Snowflake has become one of the most sought-after cloud computing platforms in the Data Science field due to its popularity among enterprises, and hands-on experience with it gives you an advantage in the Data Science race.

About Boolean Data Systems

Boolean Data Systems is a Snowflake Select Services partner that implements solutions on cloud platforms. We help enterprises make better business decisions with data and solve real-world business analytics and data problems.
